I think we are talking about two things that have nothing to do with each other.

I finished setting up the server, just to prove that what I am saying is true.

I can stream over WebRTC to the server; publishing and playing both work correctly.

The issue I was referring to is still present.

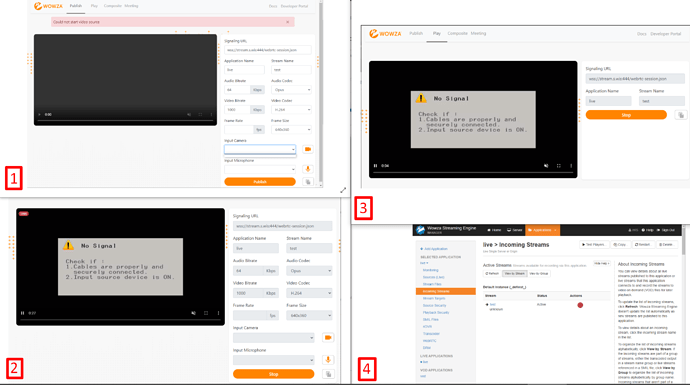

I cannot post more than one screenshot, so here is a big one:

[1] Publish page just after loading:

As you can see, the dropdowns are empty, with the previously mentioned error.

I cannot choose the camera/microphone to use.

[2] Publish page after clicking Publish:

It does stream the “default” camera device in Google Chrome (an HDMI capture card).

[3] Play page correctly showing my stream:

[4] StreamEngineManager correctly shows that the stream is ACTIVE:

Also, I MUST leave the Frame Rate input empty; otherwise the stream does not even start and returns the same "Could not start video source" error.

As I said, there is an issue somewhere in the JavaScript that prevents my devices from showing up in the dropdowns.

Edit: The issue was indeed the FrameRate parameter.

I think that’s because some virtual devices don’t expose the FrameRate parameter; completely removing it from the example code fixed the issue.
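For anyone hitting the same thing, here is a rough sketch of the kind of change I mean. It is not the actual example code from the publish page: `buildConstraints` and `openCamera` are names I made up, and the three arguments stand in for whatever the dropdowns and the Frame Rate input contain. The idea is simply to leave `frameRate` out of the `getUserMedia` constraints when the input is empty, and to pass it as an `ideal` preference rather than a hard requirement when it is set.

```javascript
// Hypothetical helper (not from the original example page): builds the
// constraints object for getUserMedia, adding frameRate only when the
// Frame Rate input holds a usable number.
function buildConstraints(videoDeviceId, audioDeviceId, frameRateInput) {
  const video = {};

  // Pin the camera only if one was picked in the dropdown.
  if (videoDeviceId) {
    video.deviceId = { exact: videoDeviceId };
  }

  // An empty or non-numeric input parses to NaN and is skipped entirely.
  const frameRate = Number.parseFloat(frameRateInput);
  if (Number.isFinite(frameRate) && frameRate > 0) {
    // "ideal" is a preference, not a requirement, so it should not by
    // itself make the request fail on a device that cannot match it.
    video.frameRate = { ideal: frameRate };
  }

  const audio = audioDeviceId ? { deviceId: { exact: audioDeviceId } } : true;

  // With no specific video constraints, fall back to the browser default.
  return { video: Object.keys(video).length > 0 ? video : true, audio };
}

// Browser-only usage; navigator.mediaDevices is not available outside a page.
async function openCamera(videoDeviceId, audioDeviceId, frameRateInput) {
  const constraints = buildConstraints(videoDeviceId, audioDeviceId, frameRateInput);
  return navigator.mediaDevices.getUserMedia(constraints);
}
```

I have only confirmed that removing the parameter completely works with my devices; whether the `ideal` form also works with every virtual device is an assumption on my part.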