What Is Low Latency? Meaning, Use Cases, and Streaming Protocols Explained

Here’s a dirty secret: When it comes to streaming, it’s rare that “live” actually means live. Say you’re at home watching a live streamed concert, and you see an overly excited audience member jump onstage. Chances are that event occurred at the concert venue some 30 seconds prior to when you saw it on your screen. That’s why achieving low latency is critical for real-time streaming experiences.

Why? Because it takes time to pass chunks of data from one place to another. This delay between when a camera captures video and when the video is displayed on a viewer’s screen is called latency. That said, latency can vary and low-latency streaming efforts can reduce this delay to the sub-five-second range.

In this guide, we’ll explore the meaning of low latency, how it compares to high latency, when it matters, and which streaming protocols are best equipped to deliver fast, reliable video.

Table of contents

- What Does Latency Mean?

- What Causes Latency?

- Low Latency vs. High Latency: What’s the Difference?

- What Is Low Latency? (Definition & Meaning)

- Is Lower Latency Always Better?

- Why Is Low Latency Important?

- What Category of Latency Fits Your Scenario?

- Use Cases For Low Latency Streaming

- How Is Low Latency Achieved?

- Protocols for Low-Latency Streaming

- FAQ: Low Latency Explained

- Conclusion

What Does Latency Mean?

Latency is the time it takes for computer data to be processed over a network connection. In video streaming, this refers to the delay between when a video is captured and when it’s displayed on a viewer’s device.

Passing chunks of data from one place to another takes time, so latency builds up at every step of the streaming workflow. The term glass-to-glass latency is used to represent the total time difference between source and viewer. Other terms, like “capture latency” or “player latency,” only account for lag introduced at a specific step of the streaming workflow.
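To make the idea of latency building up concrete, here is a minimal sketch that adds up per-stage delays into a glass-to-glass figure. The stage names follow the workflow steps named in this guide, but the numbers in `EXAMPLE_STAGES_S` are hypothetical placeholders chosen for illustration, not measurements.

```python
from typing import Mapping

# Hypothetical per-stage delays in seconds. These are illustrative
# placeholders, not measurements from any real workflow.
EXAMPLE_STAGES_S = {
    "capture": 0.1,
    "encode": 0.5,
    "streaming_server": 1.0,
    "cdn": 0.5,
    "player": 4.0,
}


def glass_to_glass_s(stages: Mapping[str, float]) -> float:
    """Total source-to-viewer delay: the sum of every stage's delay."""
    return sum(stages.values())


def slowest_stage(stages: Mapping[str, float]) -> str:
    """Name of the stage that contributes the most delay."""
    return max(stages, key=lambda name: stages[name])


if __name__ == "__main__":
    total = glass_to_glass_s(EXAMPLE_STAGES_S)
    print(f"glass-to-glass: {total:.1f} s")
    print(f"slowest stage: {slowest_stage(EXAMPLE_STAGES_S)}")
```

A figure like “player latency” corresponds to a single entry in the dictionary, while glass-to-glass latency is the total across all of them.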

What Causes Latency?

You could just as well ask “what causes traffic on the highway?” It takes time for anything, even digital information, to get from one place to another. When you add in various stops along the way, that lag can add up, creating larger delays between recording raw data and displaying it on a playback device.

So, what stops must your video data make before reaching its destination? Raw data must be encoded, a process that involves compressing and formatting media files for transfer over a network. These files then typically go to a streaming server, which allows them to be transcoded (unencoded, altered according to size or resolution, and re-encoded) and/or transmuxed (changed to a different protocol for delivery). Finally, they may go through a content delivery network (CDN) on their way to various playback devices.

Of course, much like traffic, latency can also be affected by things like bandwidth and physical distance. Just as cars bottleneck when lanes are reduced, data backs up when bandwidth is limited. CDNs can help overcome physical distance by serving video from locations closer to viewers. Adaptive bitrate streaming (ABR), meanwhile, helps with limited bandwidth by dynamically adjusting video quality based on the viewer’s bandwidth and device capabilities. This allows for faster start times and less buffering, especially in situations with variable internet speeds or weaker connections.
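As a rough illustration of how adaptive bitrate streaming adjusts quality to bandwidth, the sketch below picks the highest rendition that fits within a viewer’s measured throughput. The bitrate ladder, the `safety_factor` headroom, and the function name are all assumptions made for this example; real players use more elaborate heuristics.

```python
from typing import NamedTuple, Sequence


class Rendition(NamedTuple):
    name: str
    bitrate_kbps: int


# Hypothetical bitrate ladder; real ladders vary by service and content.
EXAMPLE_LADDER = [
    Rendition("1080p", 5000),
    Rendition("720p", 2800),
    Rendition("480p", 1400),
    Rendition("240p", 400),
]


def pick_rendition(
    ladder: Sequence[Rendition],
    measured_kbps: float,
    safety_factor: float = 0.8,  # assumed headroom so playback does not stall
) -> Rendition:
    """Return the highest-bitrate rendition that fits the bandwidth budget."""
    budget = measured_kbps * safety_factor
    affordable = [r for r in ladder if r.bitrate_kbps <= budget]
    if not affordable:
        # Nothing fits: fall back to the lowest rendition rather than stall.
        return min(ladder, key=lambda r: r.bitrate_kbps)
    return max(affordable, key=lambda r: r.bitrate_kbps)


if __name__ == "__main__":
    for kbps in (10000, 4000, 300):
        print(kbps, "kbps ->", pick_rendition(EXAMPLE_LADDER, kbps).name)
```

A player would repeat this choice as its bandwidth estimate changes, which is what keeps playback going on variable connections.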

Low Latency vs. High Latency: What’s the Difference?

- Low latency typically refers to under five seconds of delay between capture and playback.

- High latency can stretch to 30 seconds or more, which is common in HLS or DASH streams.

The key difference? Low latency prioritizes immediacy, which is critical for interactivity, while high latency favors quality and scalability — ideal for passive viewing like news or entertainment.

What Is Low Latency? (Definition & Meaning)

So, if several seconds of latency is normal, then what is considered low latency? Speaking broadly, it refers to minimal data processing delays over a network. When it comes to streaming, low latency describes a glass-to-glass delay of five seconds or less.

That said, not every streaming method or protocol can accomplish low latency. The popular Apple HLS streaming protocol defaults to approximately 30 seconds of latency (more on this below), while traditional cable and satellite broadcasts are viewed at about a five-second delay behind the live event.

What’s more, it’s a largely subjective term with many not-quite-synonyms out there in the digital ether. Some people require even faster delivery than is typically promised by low latency. For this reason, separate categories like ultra-low latency and near real-time streaming have emerged. These refer to latency coming in under one second, or sub-second latency, and are usually reserved for interactive use cases like two-way chats and real-time device control (think live streaming from a drone). These cases involve feedback on the viewer’s side, which can be greatly affected by even a few seconds of latency.

Is Lower Latency Always Better?

Not always. While some real-time applications like auctions, gaming, or two-way video require lower latency (or even ultra-low latency), there can be some tradeoffs:

- Higher complexity

- Lower scalability

- Potential video quality sacrifices

For passive experiences — like streaming a concert or a recorded webinar — a few extra seconds of delay is often preferable if it means better video quality and fewer playback issues.

Why Is Low Latency Important?

As evidenced by the discussion of ultra-low latency above, not every streaming use case requires the same degree of “real time.” Naturally, nobody specifically wants high latency, but in which cases does low latency truly matter?

A slight delay is often not problematic. In many cases, matching a standard broadcast latency of 5-7 seconds is ideal. In a 2021 Video Streaming Latency Report, 53% of broadcasters indicated they were experiencing a latency in the 3-45 second range.

Returning to our concert example, it’s often irrelevant if 30 seconds pass before viewers find out that the lead guitarist broke a string. In fact, it may even be preferable if certain broadcast companies want the option to cut video feed in advance of certain events. Additionally, attempting to deliver the live stream quicker can introduce complexity, costs, scaling obstacles, and failure opportunities. Sometimes, it’s just not worth the hassle.

What Category of Latency Fits Your Scenario?

You’ll want to differentiate between multiple categories of latency and determine which is best suited for your streaming scenario. These categories include:

- Near real time for video conferencing and remote devices

- Low latency for interactive content

- Reduced latency for live premium content

- Typical HTTP latencies for linear programming and one-way streams

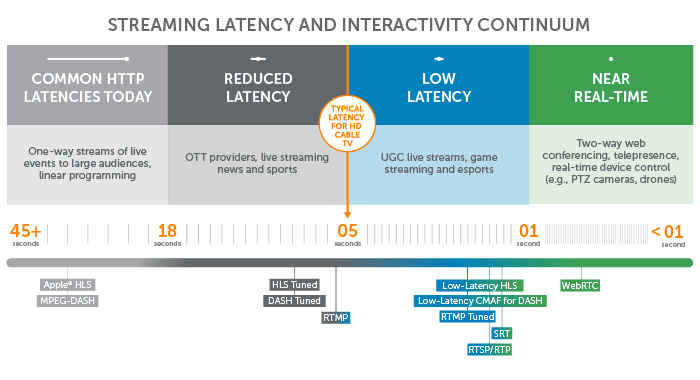

You’ll notice in the chart above that the more passive the broadcast, the less latency matters.

You’ll also notice that the two most common HTTP-based protocols (HLS and MPEG-DASH) are at the high end of the spectrum. So why are the most popular streaming protocols also sloth-like when it comes to measurements of glass-to-glass latency?

Due to their scalability and adaptability, HTTP-based protocols ensure reliable, high-quality experiences across all screens. This is achieved with technologies like buffering and adaptive bitrate streaming – both of which improve viewing experience by increasing latency.
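The four categories listed earlier in this section can be sketched as a simple lookup. The sub-one-second and five-second boundaries come from the definitions in this guide. The ceiling for “reduced latency” (`reduced_ceiling_s`) is not stated here, so the default of 18 seconds is purely an assumption for illustration.

```python
def latency_category(delay_s: float, reduced_ceiling_s: float = 18.0) -> str:
    """Map a glass-to-glass delay in seconds to one of the four categories.

    reduced_ceiling_s is an assumed boundary between "reduced latency"
    and typical HTTP latencies; adjust it to your own definition.
    """
    if delay_s < 0:
        raise ValueError("delay_s must be non-negative")
    if delay_s < 1.0:
        return "near real time"
    if delay_s <= 5.0:
        return "low latency"
    if delay_s <= reduced_ceiling_s:
        return "reduced latency"
    return "typical HTTP latency"


if __name__ == "__main__":
    for delay in (0.5, 3, 10, 30):
        print(f"{delay} s -> {latency_category(delay)}")
```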

Balancing Latency With Quality

High resolution is all well and good, but what about scenarios where a high-quality viewing experience requires lightning-fast delivery?

Say you’re streaming a used car auction. Timely bidding will take precedence over picture quality any day of the week. In cases like this, getting the video where it needs to go quickly is more important than 4K resolution. More examples to follow.

Use Cases For Low Latency Streaming

Let’s take a look at a few streaming use cases where minimizing video lag is undeniably important.

Second-Screen Experiences

If you’re watching a live event on a second-screen app (such as a sports league or official network app), you’re likely running several seconds behind live TV. While there’s inherent latency for the television broadcast, your second-screen app needs to at least match that same level of latency to deliver a consistent viewing experience.

For example, if you’re watching your alma mater play in a rivalry game, you don’t want your experience spoiled by social media comments, news notifications, or even the neighbors next door celebrating the game-winning score before you see it. This results in unhappy fans and dissatisfied (often paying) customers.

Today’s broadcasters are also integrating interactive video chat with large-scale broadcasts for things like watch parties, virtual breakout rooms, and more. These multimedia scenarios require low-latency video streaming to ensure a synced experience free of any spoilers.

Video Chat

This is where ultra-low latency “real-time” streaming comes into play. We’ve all seen televised interviews where the reporter is speaking to someone at a remote location, and the latency in their exchange results in long pauses or the two parties talking over each other. That’s because the latency goes both ways. Maybe it takes a full second for the reporter’s question to make it to the interviewee, but then it takes another second for the interviewee’s reply to get back to the reporter. These conversations can turn painful quickly.

When true immediacy matters, about 500 milliseconds of latency in each direction is the upper limit. That’s short enough to allow for smooth conversation without awkward pauses.
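The arithmetic behind the interview example is simple enough to sketch. The function names are made up for this illustration, and the only number taken from the text is the 500-millisecond per-direction limit.

```python
MAX_ONE_WAY_MS = 500  # upper limit per direction cited above


def round_trip_ms(outbound_ms: float, return_ms: float) -> float:
    """Delay between asking a question and hearing the start of the reply."""
    return outbound_ms + return_ms


def is_conversational(one_way_ms: float) -> bool:
    """True if a single direction stays within the 500 ms limit."""
    return one_way_ms <= MAX_ONE_WAY_MS


if __name__ == "__main__":
    # The televised interview: one full second in each direction.
    print(round_trip_ms(1000, 1000), is_conversational(1000))
    # A link that sits right at the limit in both directions.
    print(round_trip_ms(500, 500), is_conversational(500))
```

The interview case adds up to a two-second gap before any reply is heard, which is why those exchanges feel so stilted.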

Betting and Bidding

Activities such as auctions and sports-track betting are exciting because of their fast pace. And that speed calls for real-time streaming with two-way communication.

For instance, horse-racing tracks have traditionally piped in satellite feeds from other tracks around the world and allowed their patrons to bet on them online. Ultra-low-latency streaming eliminates problematic delays, ensuring that everyone has the same opportunity to place their bets in a time-synchronized experience. Similarly, online auctions and trading platforms are big business, and any delay can mean bids or trades aren’t recorded properly. Fractions of a second can mean billions of dollars.

Video Game Streaming and Esports

Anyone who’s yelled “this game cheats!” (or more colorful invectives) at a screen knows that timing is critical for gamers. Sub-100-millisecond latency is a must. No one wants to play a game via a streaming service and discover that they’re firing at enemies who are no longer there. In platforms offering features for direct viewer-to-broadcaster interaction, it’s also important that viewer suggestions and comments reach the streamer in time for them to beat the level.

Remote Operations

Any workflow that enables a physically distant operator to control machines using video requires real-time delivery. Examples range from video-enabled drill presses and endoscopy cameras to smart manufacturing and digital supply chains.

For these scenarios, any delay north of one second could be disastrous. Maintaining a continuous feedback loop between the device and operator is also key, which often requires advanced architectures leveraging timed metadata.

Real-Time Monitoring

Coast guards use drones for search-and-rescue missions, doctors use IoT devices for patient monitoring, and military-grade bodycams help connect frontline responders with their commanders. Any lag could mean the difference between life and death, making low latency critical for these applications.

Real-time monitoring is also well entrenched in the consumer world – powering everything from pet and baby monitors to wearable devices. Whether communicating with the delivery person via a doorbell cam or monitoring the respiratory rate of a newborn, many of these video-enabled devices let viewers play an active role.

Interactive Streaming and User-Generated Content

From digital interactive fitness to social media sites like TikTok, interactive streaming is becoming the norm. This combination of internet-based video delivery and two-way data exchange boosts audience engagement by empowering viewers to influence live content via participation.

For example, Peloton incorporates health, video, and audio feedback into an ongoing experience that keeps their users hooked. Likewise, e-commerce websites like Taobao power influencer streaming in the retail industry. End-users become contributing members in these types of broadcasts, making speedy delivery essential to community participation.

How Is Low Latency Achieved?

Achieving low latency requires tuning multiple parts of your streaming pipeline:

- Reduce segment size in protocols like HLS, LL-HLS, DASH, or LL-DASH

- Use real-time protocols like WebRTC or SRT

- Optimize encoding settings for speed over compression

- Limit buffering and prefetching on the player side

Tradeoffs include lower video quality and reduced scalability, so the right balance depends on your use case.

How Does Low-Latency Streaming Work?

Now that you know what it is and when it’s important, you’re probably wondering, how can I deliver lightning-fast streams?

As with most things in life, low-latency streaming involves tradeoffs. You’ll have to balance three factors to find the mix that’s right for you:

- Encoding efficiency and device/player compatibility

- Audience size and geographic distribution

- Video resolution and complexity

The streaming protocol you choose makes a big difference, so let’s dig into that.

Apple HLS is the most widely used protocol for stream delivery due to its reliability – but it wasn’t originally designed for true low-latency streaming. As an HTTP-based protocol, HLS streams chunks of data, and video players need a certain number of chunks (typically three) before they start playing. If you’re using the default chunk size for traditional HLS (6 seconds), that means you’re already lagging significantly behind. Customization via tuning can cut this down, but the smaller you make those chunks, the more likely your viewers are to experience buffering.
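The chunk math above is easy to work through. This sketch covers only the delay a player adds by waiting for chunks before it starts playing. It ignores encoding, server, and network time, and the function name is an assumption made for illustration.

```python
def hls_startup_delay_s(chunk_duration_s: float, chunks_before_playback: int = 3) -> float:
    """Seconds of video a player holds before playback can begin."""
    if chunk_duration_s <= 0 or chunks_before_playback < 1:
        raise ValueError("chunk duration and chunk count must be positive")
    return chunk_duration_s * chunks_before_playback


if __name__ == "__main__":
    for duration in (6, 2, 1):
        delay = hls_startup_delay_s(duration)
        print(f"{duration}-second chunks -> {delay} s behind live")
```

With three 6-second chunks, the player is 18 seconds behind before anything else in the workflow is counted. Dropping to 2-second chunks brings that piece down to 6 seconds, at the cost of the buffering risk described above.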

Protocols for Low-Latency Streaming

Luckily, emerging technologies for speedy delivery are gaining traction. Here’s a look at the fastest low-latency protocols currently available.

SRT

SRT is popular for use cases involving unstable or unreliable networks. As a UDP-based protocol, SRT is great at delivering high-quality video over long distances, but it suffers from limited player support without a lot of customization. For that reason, it’s more commonly used for transporting content to the ingest point, where it’s transcoded into another protocol for playback.

Benefits:

- An open-source alternative to proprietary protocols.

- High-quality and low-latency.

- Designed for live video transmission across unpredictable public networks.

- Accounts for packet loss and jitter.

Limitations:

- Not natively supported by all encoders.

- Still being adopted as newer technology.

- Not widely supported for playback.

WebRTC

WebRTC is growing in popularity as an HTML5-based solution that’s well-suited for creating browser-based applications. This open-source technology allows for low-latency delivery in a browser-based, Flash-free environment. However, it wasn’t designed for one-to-many broadcasts.

Streaming solutions, such as Wowza’s Real-Time Streaming at Scale, allow broadcasters to leverage the ultra-low latency capabilities of WebRTC while streaming to up to a million viewers. And because WebRTC can be used from end-to-end, it’s gaining traction as both an ingest and delivery format.

Benefits:

- Easy, browser-based contribution

- Supports interactivity at 500-millisecond delivery

- Can be used end-to-end for some use cases

Limitations:

- Not the best option for broadcast-quality video contribution, because some of the features that enable near real-time delivery come at the expense of quality

- Difficult and typically more expensive to scale than HLS or DASH

Low-Latency HLS

Low-Latency HLS is the next big thing when it comes to low-latency video streaming. The spec promises to achieve sub-two-second latencies at scale — while also offering backward compatibility to existing clients. Large-scale deployments of this HLS extension require integration with CDNs and players, and vendors across the streaming ecosystem are working to add support.

Benefits:

- All the benefits of HLS — with lightning-fast delivery to boot

- Ideal for interactive live streaming at scale

Limitations:

- In practice, often lands at about 3-5 seconds of latency, so it isn’t ideal for ultra-low-latency needs.

Low-Latency DASH

Similar to Low-Latency HLS, Low-Latency CMAF for DASH is an alternative to traditional HTTP-based video delivery. It was developed by DASH-IF (now the SVTA) with the promise of delivering super fast video at scale.

Benefits:

- All the benefits of MPEG-DASH, plus low-latency delivery

Limitations:

- Doesn’t play back on as many devices as HLS because Apple doesn’t support it

RTMP

RTMP delivers high-quality streams efficiently and remains in use by most broadcasters for speedy video contribution. However, it has disappeared from the publishing end of most workflows due to incompatibility with HTML5 playback devices.

Benefits:

- Low latency and well established

- Supported by most encoders and media servers

- Required by many social media platforms for ingest

Limitations:

- RTMP has died on the playback side and thus is no longer an end-to-end technology.

- As a legacy protocol, RTMP ingest will likely be replaced by more modern, open-source alternatives like SRT
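The roles described above can be summarized in a small lookup table. This is a simplification based only on how each protocol is characterized in this guide (for example, SRT is marked as ingest-only because playback support is limited, not absent), and the names `Protocol` and `protocols_for` are made up for this sketch.

```python
from typing import List, NamedTuple


class Protocol(NamedTuple):
    name: str
    ingest: bool    # commonly used to contribute video to a server
    playback: bool  # commonly used to deliver video to viewers


# Simplified from the descriptions in this guide, not an exhaustive matrix.
PROTOCOLS = [
    Protocol("SRT", ingest=True, playback=False),
    Protocol("WebRTC", ingest=True, playback=True),
    Protocol("Low-Latency HLS", ingest=False, playback=True),
    Protocol("Low-Latency DASH", ingest=False, playback=True),
    Protocol("RTMP", ingest=True, playback=False),
]


def protocols_for(role: str) -> List[str]:
    """List protocol names suited to a role: 'ingest' or 'playback'."""
    if role not in ("ingest", "playback"):
        raise ValueError("role must be 'ingest' or 'playback'")
    return [p.name for p in PROTOCOLS if getattr(p, role)]


if __name__ == "__main__":
    print("ingest:", protocols_for("ingest"))
    print("playback:", protocols_for("playback"))
```

A common pattern that falls out of this table is pairing an ingest protocol such as SRT or RTMP with a different protocol for playback, with the streaming server converting between the two.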

FAQ: Low Latency Explained

Q: What does latency mean in streaming?

A: Latency is the delay between when a video is captured and when it’s displayed — usually measured in seconds.

Q: What is low latency?

A: It refers to minimal streaming delay — typically under five seconds — to support real-time or interactive experiences.

Q: Is lower latency better?

A: Not always. It’s better for real-time interaction, but can reduce quality or scalability.

Q: Why is low latency needed?

A: It’s essential for many live applications. Ultra-low latency, or even real-time streaming, is especially needed for applications like video chat, esports, remote control, and live betting, where every second counts.

Q: How is low latency achieved?

A: Through faster protocols, sub-segment delivery, optimized encoding, and minimal buffering.

Q: What’s the difference between low latency and high latency?

A: Low latency prioritizes speed and interactivity. High latency favors quality, scale, and reliability.

Conclusion

For whatever low-latency protocol you choose to employ, you’ll want streaming technology that provides you with fine-grained control over latency, video quality, and scalability — while offering the greatest flexibility. That’s where Wowza comes in.

With a wide variety of features, projects, and protocol compatibilities, there’s a Wowza low-latency solution for every use case. Whether you’re broadcasting with Wowza Video or Wowza Streaming Engine, we have the technology to get your stream from camera to screen with unmatched speed, reliability, quality, and resiliency.